Delta Lake Explained

Architecture, Benefits, Comparisons, and Real-World Examples

Modern organizations generate massive volumes of data every day. Managing that data reliably—while keeping it fast, accurate, and scalable—is a challenge. This is where Delta Lake plays a critical role.

This guide explains what Delta Lake is, how it works, why it matters, and how it compares to traditional data lakes and data warehouses, using clear explanations and real-world examples.

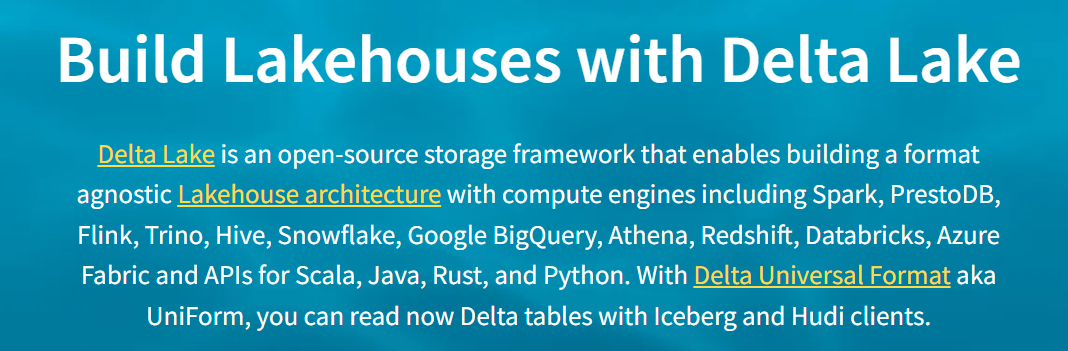

What Is Delta Lake?

Delta Lake is an open-source data storage framework that brings reliability, performance, and governance to data lakes. It is designed to sit on top of cloud storage systems such as Amazon S3, Azure Data Lake Storage, or Google Cloud Storage.

At its core, Delta Lake adds database-like features to a data lake, including:

ACID transactions

Schema enforcement and evolution

Time travel and versioning

Unified batch and streaming processing

Delta Lake was originally created by Databricks and is deeply integrated with Apache Spark.

Why Delta Lake Exists

Traditional data lakes store raw files (Parquet, JSON, CSV) but lack strong guarantees. This often leads to:

Corrupted or partially written files

Conflicting updates from multiple users

Schema drift and broken pipelines

Difficult debugging when data changes unexpectedly

Delta Lake solves these issues by adding a transaction log that tracks every change to the data.

How Delta Lake Works

1. Transaction Log (Delta Log)

Every table in Delta Lake has a _delta_log directory that records:

Inserts, updates, and deletes

Schema changes

Metadata and table versions

This log ensures atomic, consistent, isolated, and durable (ACID) operations—something traditional data lakes cannot guarantee.
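To make the idea concrete, here is a minimal sketch of a log-structured table in plain Python. It is a toy model, not Delta Lake's implementation: the helper names `commit` and `live_files` and the file names are hypothetical, and only two action types (`add` and `remove`) are modeled. It assumes each commit is a JSON file named by a zero-padded version number, and that the current table state is found by replaying those files in order.

```python
import json
import os
import tempfile

def commit(log_dir, version, actions):
    """Write one commit file: one JSON action per line, named by version."""
    path = os.path.join(log_dir, f"{version:020d}.json")
    with open(path, "x") as f:  # "x" fails if this version already exists
        for action in actions:
            f.write(json.dumps(action) + "\n")

def live_files(log_dir):
    """Replay commits in version order to find the table's current data files."""
    files = set()
    for name in sorted(os.listdir(log_dir)):
        with open(os.path.join(log_dir, name)) as f:
            for line in f:
                action = json.loads(line)
                if "add" in action:
                    files.add(action["add"]["path"])
                elif "remove" in action:
                    files.discard(action["remove"]["path"])
    return files

# Hypothetical table: three commits against a temporary _delta_log directory.
log_dir = os.path.join(tempfile.mkdtemp(), "_delta_log")
os.makedirs(log_dir)
commit(log_dir, 0, [{"add": {"path": "part-0000.parquet"}}])
commit(log_dir, 1, [{"add": {"path": "part-0001.parquet"}}])
# An update rewrites a file: the old file is removed and a new one is added.
commit(log_dir, 2, [{"remove": {"path": "part-0000.parquet"}},
                    {"add": {"path": "part-0002.parquet"}}])
print(sorted(live_files(log_dir)))
```

Because readers only trust files that the log lists, a data file that was written but never committed is simply ignored.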

2. ACID Transactions

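With Delta Lake, multiple jobs can safely read and write data at the same time. Readers see a consistent snapshot of the table, and writers coordinate through optimistic concurrency control. If a write fails before its commit is recorded in the log, none of its files become visible to readers, so no partial results leak into the table.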

Example:

A streaming job is ingesting sales data while an analytics job is querying totals—both run safely without corrupting the dataset.
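One way to picture how concurrent writers stay safe is optimistic concurrency: each writer tries to claim the next version number, and only one can succeed. The sketch below is a simplified illustration under that assumption, using exclusive file creation on a local file system. The function names are hypothetical, and a real system would also check whether the winning commit logically conflicts with the retrying one before retrying.

```python
import json
import os
import tempfile

def latest_version(log_dir):
    """Return the highest committed version, or -1 for an empty log."""
    versions = [int(name.split(".")[0]) for name in os.listdir(log_dir)]
    return max(versions, default=-1)

def try_commit(log_dir, read_version, actions):
    """Attempt to commit as read_version + 1. Returns True on success."""
    path = os.path.join(log_dir, f"{read_version + 1:020d}.json")
    try:
        with open(path, "x") as f:  # exclusive create: only one writer can win
            f.write("\n".join(json.dumps(a) for a in actions))
        return True
    except FileExistsError:
        return False

def commit_with_retry(log_dir, actions, max_attempts=5):
    """Re-read the latest version and retry until the commit lands."""
    for _ in range(max_attempts):
        version = latest_version(log_dir)
        if try_commit(log_dir, version, actions):
            return version + 1
    raise RuntimeError("too many concurrent commits")

log_dir = tempfile.mkdtemp()
try_commit(log_dir, -1, [{"add": {"path": "a.parquet"}}])  # becomes version 0

seen = latest_version(log_dir)  # both writers start from version 0
first = try_commit(log_dir, seen, [{"add": {"path": "b.parquet"}}])
second = try_commit(log_dir, seen, [{"add": {"path": "c.parquet"}}])
retried = commit_with_retry(log_dir, [{"add": {"path": "c.parquet"}}])
print(first, second, retried)  # True False 2
```

The losing writer never overwrites the winner's commit. It re-reads the log and lands its change as the following version.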

3. Schema Enforcement and Evolution

Delta Lake prevents unexpected schema changes from breaking pipelines.

Schema enforcement blocks invalid data

Schema evolution allows controlled schema updates

Example:

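If a new column like discount_amount appears in incoming data, schema enforcement rejects the write by default. With schema evolution enabled (for example, through the mergeSchema write option in Spark), Delta Lake adds the new column to the table schema without a manual table change.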
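The difference between enforcement and evolution can be sketched as a single check at write time. This is an illustrative model only: `resolve_schema` and its `merge_schema` flag are hypothetical names, schemas are plain dictionaries of column name to type name, and the real rules for compatible types are richer than this.

```python
def resolve_schema(table_schema, batch_schema, merge_schema=False):
    """Return the schema the table should have after accepting the batch.

    Raises ValueError when the batch is incompatible with the table.
    """
    for column, dtype in batch_schema.items():
        if column in table_schema and table_schema[column] != dtype:
            raise ValueError(
                f"type mismatch for {column!r}: {table_schema[column]} vs {dtype}"
            )
    new_columns = {c: t for c, t in batch_schema.items() if c not in table_schema}
    if new_columns and not merge_schema:
        # Enforcement: unknown columns are rejected unless evolution is on.
        raise ValueError(f"unexpected columns: {sorted(new_columns)}")
    # Evolution: existing columns keep their position, new ones are appended.
    return {**table_schema, **new_columns}

table = {"order_id": "long", "amount": "double"}
batch = {"order_id": "long", "amount": "double", "discount_amount": "double"}

try:
    resolve_schema(table, batch)
    rejected = False
except ValueError:
    rejected = True

evolved = resolve_schema(table, batch, merge_schema=True)
print(rejected, list(evolved))
```

Keeping evolution opt-in means a typo in an upstream column name fails loudly instead of silently widening the table.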

4. Time Travel (Data Versioning)

One of the most powerful features of Delta Lake is time travel.

You can query:

Previous versions of a table

Data as it existed at a specific timestamp

Example use cases:

Recovering from accidental deletes

Auditing historical data

Comparing model results across time

SELECT * FROM sales VERSION AS OF 15;
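Time travel follows naturally from an append-only log: reading an older version means replaying fewer commits. The sketch below models that idea in memory. The `Commit` class, the `rows_as_of` helper, the row identifiers, and the timestamps are all hypothetical, chosen only to illustrate querying by version and by timestamp.

```python
from dataclasses import dataclass

@dataclass
class Commit:
    version: int
    timestamp: int        # seconds since epoch (made-up values)
    added: tuple = ()
    removed: tuple = ()

def rows_as_of(log, version=None, timestamp=None):
    """Replay commits up to a version or timestamp and return surviving rows."""
    rows = set()
    for c in log:
        if version is not None and c.version > version:
            break
        if timestamp is not None and c.timestamp > timestamp:
            break
        rows |= set(c.added)
        rows -= set(c.removed)
    return rows

log = [
    Commit(0, 1000, added=("order-1", "order-2")),
    Commit(1, 2000, added=("order-3",)),
    # Version 2 is an accidental delete of every row.
    Commit(2, 3000, removed=("order-1", "order-2", "order-3")),
]

print(sorted(rows_as_of(log)))                  # current state: []
print(sorted(rows_as_of(log, version=1)))       # state before the delete
print(sorted(rows_as_of(log, timestamp=1500)))  # state at an earlier time
```

The deleted rows are still reachable because the earlier commits are never rewritten.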

Delta Lake Architecture Explained

Most Delta Lake implementations follow the Medallion Architecture:

Bronze Layer

Raw, ingested data

Minimal transformation

High volume, unfiltered

Silver Layer

Cleaned and validated data

Deduplicated records

Business logic applied

Gold Layer

Aggregated, analytics-ready data

Optimized for reporting and dashboards

This layered approach improves data quality, performance, and maintainability.
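The three layers can be illustrated with a tiny pipeline over in-memory records. This is a sketch of the pattern only, not of any Delta Lake API: the sample rows, the `to_silver` and `to_gold` functions, and the validation rules are hypothetical stand-ins for what would normally be Spark jobs writing to separate tables.

```python
from collections import defaultdict

# Bronze: raw events exactly as ingested, including a duplicate and a bad row.
bronze = [
    {"order_id": "1", "customer": " alice ", "amount": "20.00"},
    {"order_id": "1", "customer": " alice ", "amount": "20.00"},
    {"order_id": "2", "customer": "bob", "amount": "not-a-number"},
    {"order_id": "3", "customer": "alice", "amount": "5.50"},
]

def to_silver(rows):
    """Validate, normalize, and deduplicate by order_id."""
    seen, clean = set(), []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # drop rows that fail validation
        if row["order_id"] in seen:
            continue  # drop duplicates
        seen.add(row["order_id"])
        clean.append({
            "order_id": int(row["order_id"]),
            "customer": row["customer"].strip(),
            "amount": amount,
        })
    return clean

def to_gold(rows):
    """Aggregate revenue per customer for reporting."""
    revenue = defaultdict(float)
    for row in rows:
        revenue[row["customer"]] += row["amount"]
    return dict(revenue)

silver = to_silver(bronze)
gold = to_gold(silver)
print(len(bronze), len(silver), gold)  # 4 2 {'alice': 25.5}
```

Keeping Bronze untouched means Silver and Gold can always be rebuilt if a cleaning rule turns out to be wrong.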

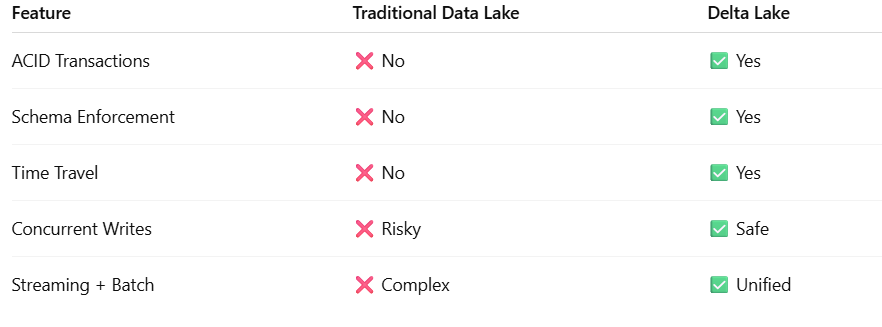

Delta Lake vs Traditional Data Lake

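A traditional data lake is file storage with no transactional guarantees. Delta Lake keeps the same open file formats but adds a transaction log on top, so reads and writes stay consistent and every change is traceable.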

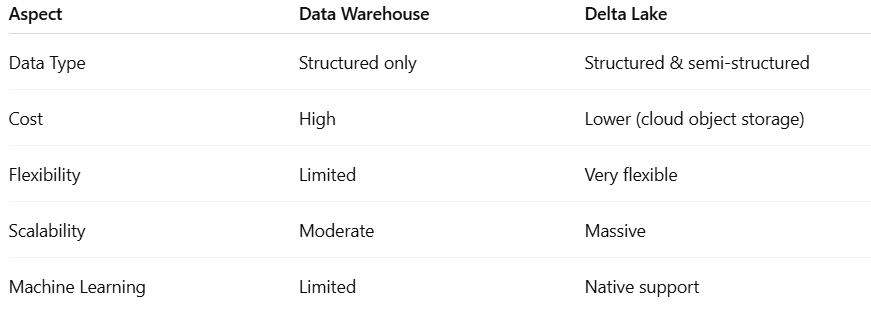

Delta Lake vs Data Warehouse

Many organizations now use Delta Lake as a lakehouse, combining the strengths of data lakes and data warehouses into one platform.

Real-World Use Cases of Delta Lake

1. Business Intelligence & Analytics

Companies use Delta Lake to power dashboards in tools like Power BI and Tableau, ensuring accurate and up-to-date metrics.

2. Streaming Data Pipelines

IoT devices, clickstream data, and financial transactions are streamed directly into Delta Lake with guaranteed consistency.

3. Machine Learning & AI

Data scientists use Delta Lake for feature stores, model training datasets, and reproducible experiments.

4. Data Governance & Auditing

Time travel and transaction logs make Delta Lake ideal for regulated industries like healthcare and finance.

Delta Lake Example Scenario

E-commerce Company

Raw order events land in Bronze

Cleaned customer and product data move to Silver

Revenue, sales trends, and KPIs are built in Gold

Analysts query dashboards

Data scientists train demand forecasting models

Engineers restore a table to an earlier version if a bad write occurs

All from one Delta Lake platform.
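The rollback step in this scenario can be sketched as "restore by appending": instead of erasing the bad version, a new version is written whose contents match an earlier one. The model below is a deliberate simplification with hypothetical names, where each version is stored as a full snapshot rather than as a list of file changes.

```python
def restore(history, version):
    """Append a new version whose contents equal an earlier version."""
    history.append(history[version])
    return len(history) - 1

history = [
    frozenset({"order-1"}),             # version 0
    frozenset({"order-1", "order-2"}),  # version 1
    frozenset(),                        # version 2: a bad job deleted everything
]

new_version = restore(history, 1)
print(new_version, sorted(history[new_version]))  # 3 ['order-1', 'order-2']
```

The faulty version 2 stays in the history, which preserves the audit trail of what went wrong and when.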

Why Delta Lake Is So Popular

Organizations choose Delta Lake because it:

Prevents failed or conflicting writes from corrupting tables

Simplifies data pipelines

Scales with business growth

Supports analytics, BI, and ML together

Reduces infrastructure complexity

It runs on the major cloud object stores, including Azure Data Lake Storage, Amazon S3, and Google Cloud Storage.

Final Thoughts

Delta Lake transforms raw cloud storage into a reliable, enterprise-grade data platform. By combining ACID transactions, schema control, time travel, and high performance, it enables organizations to trust their data while moving faster.

For teams struggling with unreliable pipelines, schema chaos, or disconnected analytics systems, Delta Lake offers a proven and scalable solution that bridges the gap between data lakes and data warehouses.

Thank you for reading this blog article.

If you’d like to explore my books, please visit my Amazon Author Page through the link below.

https://www.amazon.com/stores/Dennis-Duke/author/B0DVQSM1Q8